Python stack and queue

9/22/2023

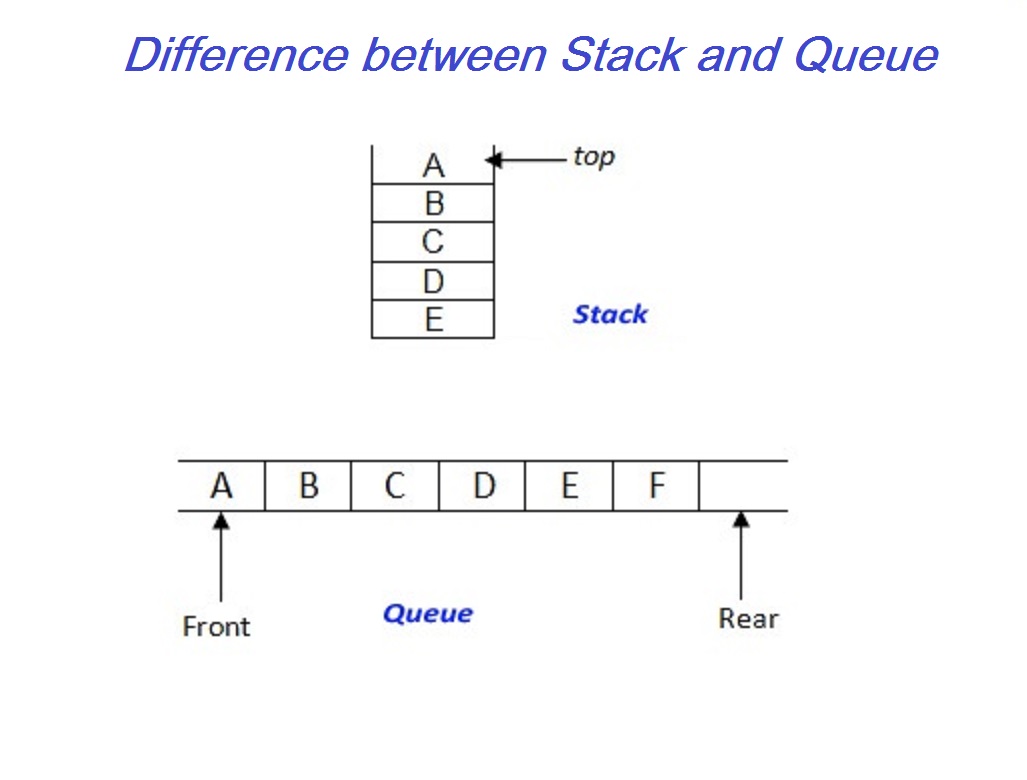

Queues are a linear abstract data type with some key differences from stacks. Queues hold a collection of items in the order in which they were added. Items are added to the back of a queue and removed from the front of the queue. The last time you went grocery shopping you likely had to wait in a line to check out. If you think of a queue as a line of customers, a customer adds themselves to the end of the line and eventually leaves from the front of the line. This is called first-in-first-out or FIFO. This is different from stacks, which are last-in-first-out.

I have some trouble exchanging an object (a DataFrame) between 2 processes through a Queue. The first process gets the data from a queue, the second puts data into the queue. The put-process is faster, so the get-process should clear the queue by reading all objects. I'm seeing strange behaviour: my code works perfectly and as expected, but only for 100 rows in the DataFrame. For 1000 rows the get-process always takes only 1 object.

`import multiprocessing, time, sys`

`df = pd.DataFrame(*NR_ROWS, columns = myheader)`

`P = multiprocessing.Process(target=f_put, args=(q,))`

Output for the 100-row DataFrame, where the get-process takes all objects: `f_get start`, then `Get`, with the get-process taking all 4 objects from the queue.

Output for the 1000-row DataFrame, where the get-process takes only one object: `Get`, and the get-process takes ONLY 1 object from the queue!

Any idea what I am doing wrong, and how to pass the bigger DataFrame through as well?

At the risk of not being able to provide a fully functional example, here is what goes wrong. I tried your code again with larger DataFrames (10000 or even 100000 rows) and I start to see the same things as you do. This means you see this behaviour as soon as the size of the arrays crosses a certain threshold that will be system (CPU?) dependent.

I modified your code a bit to make it easier to see what happens. First, 5 DataFrames are put into the queue without any custom `time.sleep`. In the `f_get` function I added a counter (and a `time.sleep(0)`, see below) to the loop (`while not q.empty()`). The new code: `import multiprocessing, time, sys`

Now, run this for different numbers of rows. As long as the DataFrame is small, your assumption that the put process is faster than the get seems true: we can fetch all 5 items within one loop of `while not q.empty()`.

But as the number of rows increases, something changes. The while-condition `q.empty()` evaluates to True (the queue is empty) and the outer `while(1)` cycles. This could mean that put is now slower than get and we have to wait. But if we set the sleep time for the whole `f_get` to something like 15, we still get the same behaviour.

On the other hand, if we change the `time.sleep(0)` in the inner `q.get()` loop (`while not q.empty():`) to 1, the result looks right! And it means that get actually does something strange. It seems that while it is still processing a get, the queue state is empty, and after the get is done the next item is available. I'm sure there is a reason for that, but I'm not familiar enough with multiprocessing to see it.

Depending on your application, you could just add the appropriate `time.sleep` to your inner loop and see if that's enough. Or, if you want to solve it (instead of using a workaround such as `time.sleep`), you could look into multiprocessing and look for information on blocking, non-blocking or asynchronous communication. I think the solution will be found there.
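One way to follow the "blocking communication" hint is to stop polling `q.empty()` altogether. The Python documentation describes `empty()` on a multiprocessing queue as not reliable, and objects passed to `put()` are pickled and flushed to a pipe by a background thread, so a large payload can still be in transit while the queue reports empty. Below is a minimal sketch, not the original poster's code: the consumer blocks on `q.get()` and stops when it sees a sentinel. The names `SENTINEL`, `transfer` and `result_q` are hypothetical, plain lists of rows stand in for the DataFrames so the sketch needs no third-party packages, and preferring the `fork` start method is an assumption made only so the sketch also runs when pasted into a session without a main guard.

```python
import multiprocessing as mp

# Hypothetical "no more data" marker; not part of the original code.
SENTINEL = None


def f_put(q, n_items, n_rows):
    """Producer: put n_items payloads on the queue, then the sentinel."""
    for i in range(n_items):
        # Stand-in for a DataFrame: a list of n_rows small rows.
        payload = [[i, row] for row in range(n_rows)]
        q.put(payload)
    q.put(SENTINEL)


def f_get(q, result_q):
    """Consumer: block on q.get() instead of polling q.empty()."""
    received = 0
    while True:
        item = q.get()  # blocks until an item has fully arrived
        if item is SENTINEL:
            break
        received += 1
    result_q.put(received)


def transfer(n_items, n_rows):
    """Run one producer and one consumer; return how many payloads arrived."""
    # Assumption: use "fork" where the platform offers it, else the default.
    methods = mp.get_all_start_methods()
    ctx = mp.get_context("fork" if "fork" in methods else None)
    q, result_q = ctx.Queue(), ctx.Queue()
    producer = ctx.Process(target=f_put, args=(q, n_items, n_rows))
    consumer = ctx.Process(target=f_get, args=(q, result_q))
    producer.start()
    consumer.start()
    received = result_q.get()  # wait for the consumer's final count
    producer.join()
    consumer.join()
    return received


if __name__ == "__main__":
    for n_rows in (100, 1000, 10000):
        print(n_rows, "rows:", transfer(5, n_rows), "of 5 payloads received")
```

Because the consumer never asks whether the queue is empty, the payload size should no longer change how many items it receives, and no `time.sleep` tuning is needed. If the consumer must not wait forever, `q.get(timeout=...)` raises `queue.Empty` once the timeout expires.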
